Last Updated on February 5, 2025 by Editor

ByteDance, the parent company of TikTok, has recently unveiled OmniHuman-1, a groundbreaking AI model that transforms static images into realistic animated videos. This innovative technology leverages advanced machine learning techniques to create engaging video content from just a single image and accompanying audio. Below, we delve into the specifics of OmniHuman-1, its architecture, capabilities, and implications for the future of video generation.

Understanding OmniHuman-1

What is OmniHuman-1?

OmniHuman-1 is an AI framework designed to generate high-quality human videos by combining a single reference image with motion signals such as text, audio, and body poses. Its ability to produce realistic animations from just one reference image makes it a significant advancement in the field of generative AI.

Key Features

- Multi-modal Input Processing: The model integrates various types of input data, including text descriptions, audio signals, and body poses. This allows for a more nuanced understanding of motion and speech synchronization.

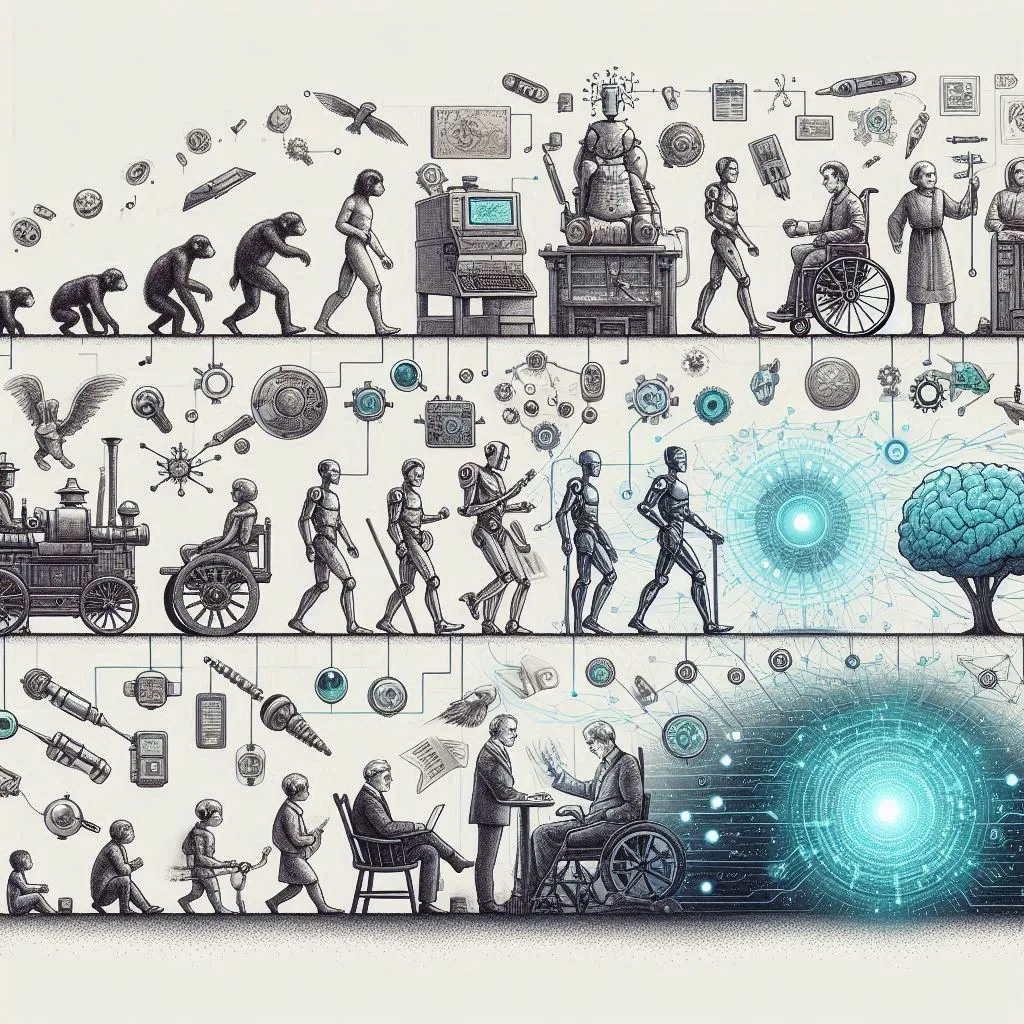

- Diffusion Transformer Architecture: OmniHuman-1 is built on a Diffusion Transformer (DiT) architecture, which underpins its ability to generate lifelike animations. The model was reportedly trained on roughly 19,000 hours of video footage, giving it broad exposure to human motion dynamics.

- High Fidelity and Flexibility: The system can generate videos with varying aspect ratios and body proportions, accommodating different styles and contexts. It supports both talking and singing animations, showcasing its versatility across applications.

Technical Insights

Training Methodology

OmniHuman-1 utilizes an “omni-conditions training strategy,” which mixes several motion-related conditions (such as text, audio, and pose) during the training phase. This approach allows the model to learn from diverse datasets, including footage that stricter single-condition filtering would otherwise discard. Key components of the pipeline include:

- Causal 3D Variational Autoencoder (3D VAE): This component encodes video sequences into a compressed latent space for efficient processing while maintaining temporal coherence.

- Classifier-Free Guidance (CFG): This technique balances realism with adherence to input cues, allowing for more naturalistic gesture synthesis and object interactions.
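
To make the classifier-free guidance idea concrete, here is a minimal sketch of the standard CFG combination rule in plain Python. It is not OmniHuman-1's implementation: the function name `cfg_combine`, the toy prediction vectors, and the guidance scale values are all illustrative assumptions. The rule takes the model's prediction without conditions, the prediction with conditions, and pushes the result further in the direction the conditions suggest.

```python
def cfg_combine(uncond, cond, guidance_scale):
    """Standard CFG rule: uncond + scale * (cond - uncond), element-wise.

    `uncond` and `cond` stand in for a denoiser's outputs without and
    with conditioning (hypothetical toy vectors, not real model outputs).
    """
    return [u + guidance_scale * (c - u) for u, c in zip(uncond, cond)]


# Hypothetical predictions for a three-element latent.
uncond_pred = [0.0, 1.0, -1.0]  # conditions dropped
cond_pred = [1.0, 1.0, 0.0]     # audio/pose conditions applied

print(cfg_combine(uncond_pred, cond_pred, 0.0))  # [0.0, 1.0, -1.0] (ignores conditions)
print(cfg_combine(uncond_pred, cond_pred, 1.0))  # [1.0, 1.0, 0.0] (plain conditional output)
print(cfg_combine(uncond_pred, cond_pred, 2.0))  # [2.0, 1.0, 1.0] (conditions amplified)
```

A scale of 1 reproduces the conditional prediction, while larger values follow the input cues more strongly at the risk of less natural output, which is the trade-off described above.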

Performance Metrics

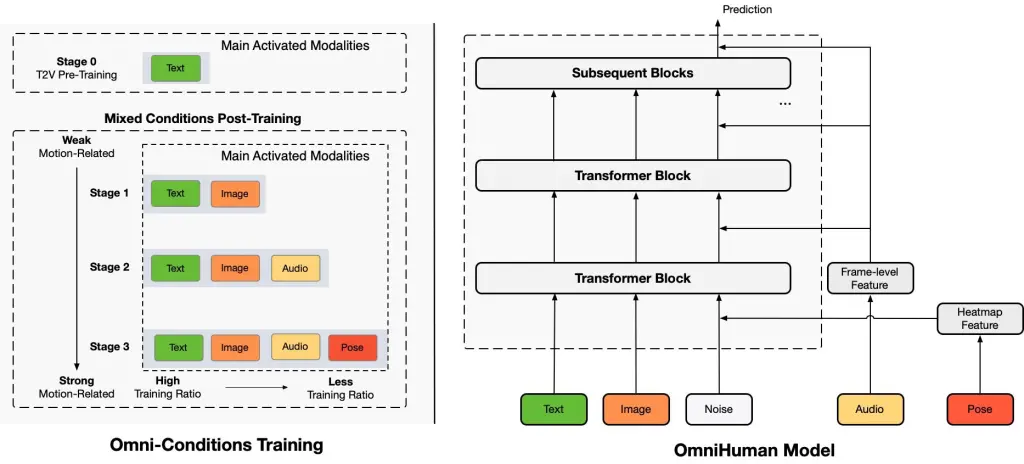

Recent evaluations indicate that OmniHuman-1 outperforms previous models like SadTalker and Hallo-3 across several key performance indicators (KPIs), including:

- Fréchet Inception Distance (FID) – The Fréchet Inception Distance (FID) is a widely recognized metric used to evaluate the quality of images generated by models such as Generative Adversarial Networks (GANs) and diffusion models. It provides a more nuanced assessment of generative models compared to earlier metrics, such as the Inception Score (IS), by comparing the distributions of generated images to those of real images.

- Fréchet Video Distance (FVD) – The Fréchet Video Distance (FVD) is a metric for video generation that evaluates the quality and diversity of generated videos while accounting for both spatial and temporal coherence. It extends the principles of the Fréchet Inception Distance (FID), which is widely used for assessing image quality, to the more complex domain of video.

- Image Quality Assessment (IQA) – Image Quality Assessment (IQA) is a crucial field that focuses on evaluating the quality of images as perceived by human observers. This assessment is vital in various applications, including image processing, computer vision, and multimedia communications. The goal of IQA is to develop metrics that can effectively measure and predict the perceptual quality of images, ensuring that they meet the required standards for clarity, detail, and overall visual appeal.

- Synchronization Confidence (Sync-C) – In the context of talking-head video generation, Sync-C is a lip-sync metric, commonly computed with a pretrained SyncNet model, that scores how closely a speaker’s mouth movements match the accompanying audio. A higher score indicates tighter audio-visual alignment, which is essential for convincing talking and singing animations.

These metrics underscore the model’s superior ability to generate realistic human animations compared to existing technologies.
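
The Fréchet-style metrics above compare the statistics of real and generated feature sets. The sketch below illustrates the underlying distance under simplifying assumptions: it treats each feature dimension as independent (diagonal covariance) and uses tiny hand-written feature vectors. A real FID computation uses features from a pretrained Inception network and full covariance matrices, so the function `frechet_distance_diag` and the sample data here are illustrative only.

```python
import math
from statistics import fmean, pvariance


def column_stats(features):
    """Return per-dimension means and population variances."""
    columns = list(zip(*features))
    means = [fmean(col) for col in columns]
    variances = [pvariance(col) for col in columns]
    return means, variances


def frechet_distance_diag(real, generated):
    """Squared Fréchet distance between two Gaussians with diagonal covariance.

    With diagonal covariances the general formula
    ||mu_r - mu_g||^2 + Tr(S_r + S_g - 2 (S_r S_g)^(1/2))
    reduces to a sum over dimensions, computed below.
    """
    mu_r, var_r = column_stats(real)
    mu_g, var_g = column_stats(generated)
    mean_term = sum((a - b) ** 2 for a, b in zip(mu_r, mu_g))
    cov_term = sum((math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(var_r, var_g))
    return mean_term + cov_term


# Hypothetical 2-D "features" for two real and two generated samples.
real_feats = [[0.0, 0.0], [2.0, 2.0]]
gen_feats = [[1.0, 0.0], [3.0, 2.0]]

print(frechet_distance_diag(real_feats, real_feats))  # 0.0 for identical sets
print(frechet_distance_diag(real_feats, gen_feats))   # 1.0: means differ by 1 in one dimension
```

Lower values mean the generated distribution sits closer to the real one, which is why a lower FID or FVD is reported as better.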

Real-world Applications

Content Creation

OmniHuman-1 has the potential to revolutionize content creation across various industries:

- Marketing: Businesses can create personalized video advertisements featuring realistic avatars that speak directly to their audience.

- Entertainment: The model can animate characters in films or video games, providing a new layer of interactivity and engagement.

- Education: Educators can utilize this technology to create dynamic instructional videos that enhance learning experiences through visual storytelling.

Ethical Considerations

While OmniHuman-1 offers exciting possibilities, it also raises ethical concerns regarding deepfakes and misinformation. As the technology becomes more accessible, discussions around AI-generated content labeling and detection tools are essential to mitigate potential misuse.

Conclusion

ByteDance’s OmniHuman-1 represents a significant leap forward in AI-driven video generation. By harnessing advanced machine learning techniques and extensive training data, this model not only enhances the realism of animated content but also opens up new avenues for creativity across multiple sectors. As we continue to explore the capabilities of OmniHuman-1, it is crucial to balance innovation with ethical considerations to ensure responsible use of this powerful technology.